ESG Assurance Readiness: Preparing Evidence Systems for ISSB and CSRD Audits

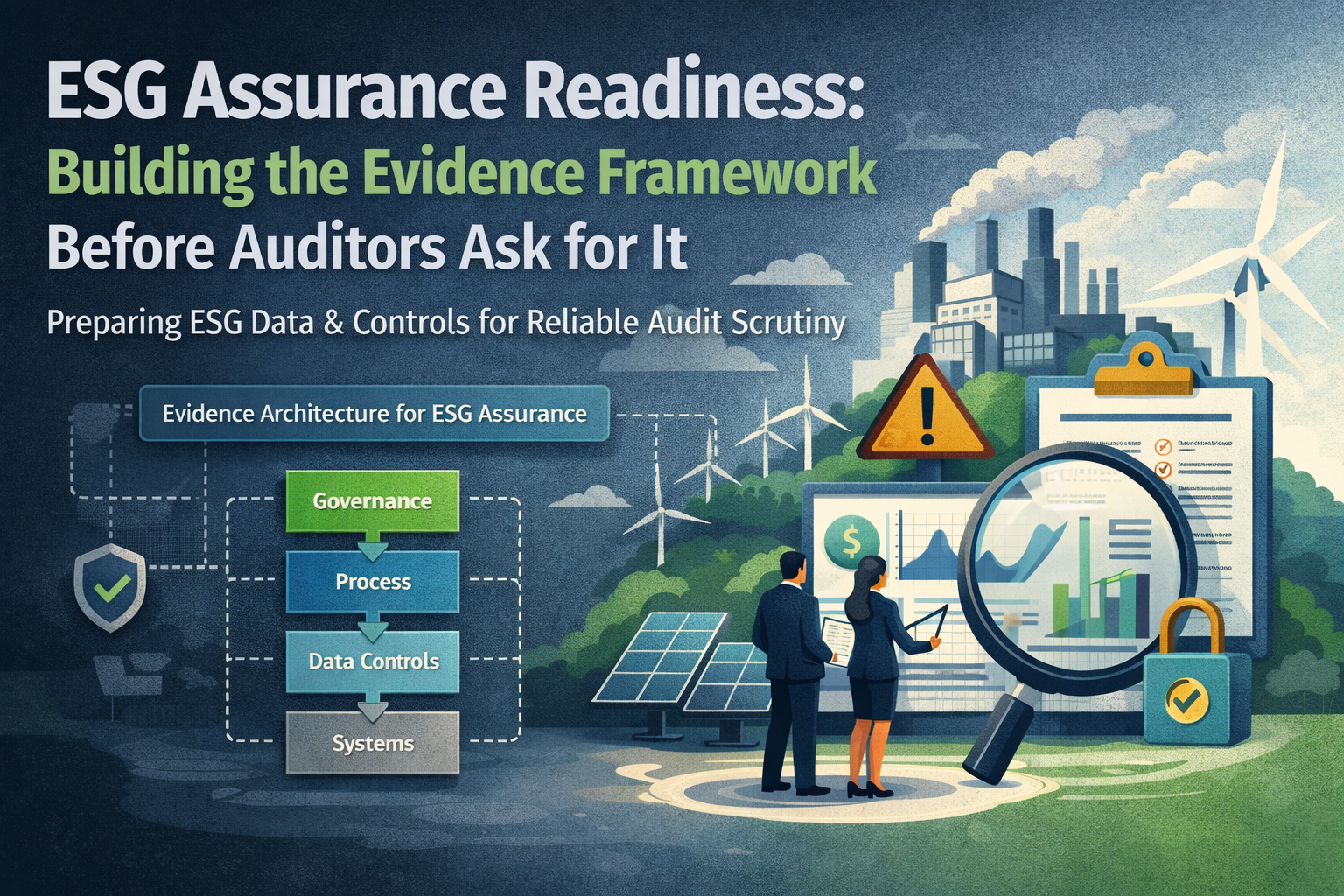

Globally, businesses are finding that the ESG landscape is evolving rapidly. As ESG reporting and disclosures continue to gain traction, a key insight is emerging: robust disclosure is not a replacement for good evidence. Management and audit committees face increasing scrutiny from regulators and key stakeholders to produce assurance-ready ESG information that is comprehensive, reproducible, objective, and of strong evidentiary value. At the time of assessment, auditors typically ask about the source of the data, the processes it has undergone, the controls it has been subjected to, and the key assumptions that underlie it.

Over the next few sections, this article examines the taxonomy behind evidence, an appropriate sampling framework for assurance readiness, control narratives that align with auditor expectations, and how to address auditor queries effectively and efficiently.

Evidence taxonomy: what is important

Before we set out the true importance of evidence, it is worth examining what defines an acceptable piece of evidence. Regulators such as the UK Financial Reporting Council (FRC) and the European Securities and Markets Authority (ESMA) have identified key guidelines that help define what evidence must cover:

- Evidence should be linkable to a specific assertion.

- Typically, it should be possible to reproduce evidence without the need to reconstruct it.

- It should be traceable through governance and control mechanisms across the value chain.

- It must be suitably stored, archived, and capable of being queried as required.

The overall body of evidence can be classified into certain key areas, such as:

- Source system outputs: these are typically raw data extracts from business-critical systems, such as ERP exports, meter snapshots, information from procurement and supply chain systems, HRMS extracts like headcount information, and even data from environmental monitoring systems. These outputs matter to auditors because they originate from a defined source system and are not merely an isolated spreadsheet with questionable provenance. In climate-related assessments, the FRC has called out organizations whose data disclosures could not be traced back to identifiable system sources. As such, traceable source system data is a non-negotiable piece of evidence.

- Transformation logs: every time data is processed and transformed using business logic such as allocations, calculations, or even estimations, the logic should be well documented, version-controlled, approved, and backed by adequate change logs. Key examples include calculation logic for emissions, allocation keys for shared assets, and management adjustments to energy utilization information. This is particularly important because, in the absence of clear documentation, assessors tend to treat outputs as raw estimates without adequate support. In fact, research around accounting assurance activities has shown that most failures result from the absence of transformation logic that is clear, objective, and well documented.

- Control execution records: evidence that controls are effective should be clearly captured and not simply implied. This means there should be appropriate evidence of approval workflows, exception logs, access control logs, and checks and balances being implemented in practice. It is important to show that ESG reporting controls have actually been executed and do not simply exist on paper.

- Review and challenge evidence: at the time of assessment, auditors test the actual execution to see whether the logic has been appropriately reviewed. They also want to see evidence of who reviewed it, what questions were raised during the review, and whether any discrepancies were found. A binary approval checkbox alone may not be enough to show that sufficient checks and balances are in place; the challenge and the response should both be documented.

- Governance artifacts: these typically include evidence for version control of policy documents, operational playbooks, business methodology manuals, and even management oversight evidence. Regulators are increasingly laying emphasis on governance artifacts as they are clear evidence of design processes and not merely disclosure-based outputs.
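The attributes above (linked to an assertion, reproducible, traceable, stored and queryable) can be illustrated with a minimal sketch of an evidence register entry. The category labels, field names, and the `register_evidence` and `verify_evidence` helpers are hypothetical and exist only for illustration; the SHA-256 fingerprint is one possible way to show that an archived artifact has not changed since capture, not a requirement stated by any regulator.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical labels mirroring the five evidence areas described above.
CATEGORIES = {
    "source_system_output",
    "transformation_log",
    "control_execution_record",
    "review_and_challenge",
    "governance_artifact",
}


@dataclass(frozen=True)
class EvidenceRecord:
    evidence_id: str
    category: str
    assertion: str       # the disclosed claim this evidence supports
    source_system: str   # where the artifact originated
    sha256: str          # fingerprint of the archived artifact
    captured_at: str     # ISO 8601 timestamp (UTC)


def register_evidence(evidence_id, category, assertion, source_system,
                      payload: bytes, captured_at=None):
    """Create a register entry; reject evidence with no category or assertion."""
    if category not in CATEGORIES:
        raise ValueError(f"unknown evidence category: {category}")
    if not assertion.strip():
        raise ValueError("evidence must be linked to a specific assertion")
    timestamp = captured_at or datetime.now(timezone.utc).isoformat()
    digest = hashlib.sha256(payload).hexdigest()
    return EvidenceRecord(evidence_id, category, assertion,
                          source_system, digest, timestamp)


def verify_evidence(record: EvidenceRecord, payload: bytes) -> bool:
    """Check that a retrieved artifact still matches the registered fingerprint."""
    return hashlib.sha256(payload).hexdigest() == record.sha256
```

In this sketch, an export pulled from the archive at audit time would be passed to `verify_evidence` together with its register entry, which gives a simple yes/no answer on whether the artifact is the one originally captured.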

Sampling logic: evidence that is conclusive

Assurance partners do not test entire data sets at the time of assessment; instead, they rely on sampling logic designed to ensure adequate risk-based coverage. The sampling approach should be both defensible and transparent; a need-based sampling approach helps keep the assessment on track while also optimising management resources. Some key sampling issues to keep in mind are as follows:

- Risk-based versus convenience sampling: convenience sampling is usually subjective and depends on samples chosen for ease of access. Auditors mostly reject this approach at the time of assessment. Risk-based sampling is more rigorous because it allocates effort to areas where data is subjective, the overall population is large, and assumptions have a material impact. Financial audits take a similar approach, which helps mitigate overall risk. For instance, in Scope 3 emissions, supplier estimates are more subjective than actual fuel usage; as such, they need a heavier sampling set.

- Metric volatility: metrics that vary widely across time periods or across business segments require a larger sampling set to understand underlying drivers. For instance, year-on-year changes in biodiversity impact areas or energy usage across different business segments need to have wider sampling sets.

- Volume of underlying data sources: usually, as the number of data sources goes up, the sample size also increases. In areas where there are multiple integration touch points, wide geographic dispersion, and a variety of historic issues, the sampling intensity needs to be tailored accordingly.

One sampling approach is to consider the volume of suppliers and the criticality of their data. For instance, if there are 10,000 suppliers contributing Scope 3 Category 1 data, a typical approach might be to first segment the suppliers by spend, use the data of the top 10% of suppliers who contribute 80% of coverage, randomly sample the next 20%, and finally take a few of the tail-end suppliers as a representative subset. This approach is both defensible and objective for the auditor at the time of final assessment.
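The tiered selection described above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the function name `select_supplier_sample`, the 10% sampling rate within the middle tier, the tail count of five, and the fixed seed are all hypothetical choices, not figures from any standard. The fixed seed is there so that the same selection can be regenerated later if an auditor asks how the sample was drawn.

```python
import random


def select_supplier_sample(spend_by_supplier, mid_rate=0.10, tail_count=5, seed=2024):
    """Split suppliers into spend tiers and pick a sample from each tier.

    spend_by_supplier: dict mapping supplier id -> spend.
    mid_rate, tail_count, seed: illustrative assumptions, to be agreed
    with the assurance provider in practice.
    """
    # Rank by spend (highest first); the id is a tie-breaker for determinism.
    ranked = sorted(spend_by_supplier, key=lambda s: (-spend_by_supplier[s], s))
    n = len(ranked)
    top_end = n // 10             # top 10% of suppliers by spend
    mid_end = top_end + n // 5    # the next 20%
    top, mid, tail = ranked[:top_end], ranked[top_end:mid_end], ranked[mid_end:]

    rng = random.Random(seed)     # seeded so the draw can be reproduced
    mid_n = min(len(mid), round(len(mid) * mid_rate))
    return {
        "top": top,                                             # tested in full
        "mid": sorted(rng.sample(mid, mid_n)),                  # random sample
        "tail": sorted(rng.sample(tail, min(tail_count, len(tail)))),
    }
```

With 10,000 suppliers and these assumed parameters, the sketch would select 1,000 top-tier suppliers, 200 from the middle tier, and 5 from the tail. Whether the top tier actually reaches 80% of spend coverage depends on the spend distribution and should be checked against the real data.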

Control narratives: what assurance providers look for at the time of assessment

Auditors do not simply look for data points and process checklists. They read between the lines to understand the control narratives that capture the intent behind the actual business processes. A robust control narrative usually explains why a control exists, who executes it, when it is run, and what evidence is produced at the end. The narrative itself drives control-based testing and is not simply a record that the controls exist.

There are some key attributes that qualify a good control narrative:

- Control objective: the control must clearly articulate the risk it addresses in terms of accuracy, completeness, and consistency.

- Control description: the logic must be robust and cover data fields, validation, acceptance logic, and exception handling.

- Control ownership: the control must have clearly defined roles, responsibilities, and authority structures.

- Control evidence: there must be appropriate evidence of the control, such as workflow logs, time stamps of approval, and reviewer comments, if any.

For example, a control objective can be to ensure that Scope 1 emissions data is complete, consistent, and accurate. To support this, the control description needs to cover extracting daily usage logs from appropriate devices such as meters, connecting to them through APIs, validating the readings against predefined business logic, and raising an alert if an exception occurs. The typical execution owner could be an operational data steward, and the evidence expected at the end covers artifacts such as API logs, exception workflows, and authorization time stamps.
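The example control above can be expressed as a small routine that produces its own execution record. This is only a sketch: the control identifier `CTRL-S1-METER`, the field names, and the plausibility threshold are hypothetical, and a real implementation would read from the meter API rather than from an in-memory list.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ControlRun:
    control_id: str
    executed_by: str      # the execution owner, e.g. a data steward
    executed_at: str      # ISO 8601 timestamp (UTC)
    accepted: list = field(default_factory=list)
    exceptions: list = field(default_factory=list)


def run_meter_control(readings, expected_meters, max_daily_usage, executed_by):
    """Validate daily meter readings and record accepted rows and exceptions.

    readings: list of dicts with 'meter_id' and 'usage' (None if missing).
    expected_meters: ids of all meters that should report (completeness check).
    max_daily_usage: assumed plausibility threshold (accuracy check).
    """
    run = ControlRun("CTRL-S1-METER", executed_by,
                     datetime.now(timezone.utc).isoformat())
    seen = set()
    for reading in readings:
        meter, usage = reading["meter_id"], reading["usage"]
        seen.add(meter)
        if usage is None or usage < 0:
            run.exceptions.append(
                {"meter_id": meter, "reason": "missing or negative reading"})
        elif usage > max_daily_usage:
            run.exceptions.append(
                {"meter_id": meter, "reason": "exceeds plausibility threshold"})
        else:
            run.accepted.append(reading)
    # Completeness: every expected meter must have reported.
    for meter in sorted(set(expected_meters) - seen):
        run.exceptions.append(
            {"meter_id": meter, "reason": "no reading received"})
    return run
```

The returned `ControlRun` is the kind of artifact an auditor could inspect: it shows who ran the control, when, what passed, and which exceptions were raised for follow-up.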

Managing auditor queries

Auditor queries usually fall into a few predictable areas, such as missing evidence, inconsistent metadata, inadequate transformation logic, and the absence of change control mechanisms. If business operations are designed with an evidence-first approach in mind, queries from auditors become opportunities to demonstrate control. A key best practice is to hold regular mock assurance sessions before the actual assessment. In addition, keeping a log of prior assurance activities, with the queries raised and the responses provided, is often helpful. This reinforces management's commitment to assurance-grade exercises and can go a long way in driving the desired outcomes.

Some organizations now maintain assurance-ready evidence packs, which include an inventory of the evidence, control narratives, transformation logic mapping, and a traceability matrix to back the evidence. This approach greatly reduces rework during assurance and delivers robust outcomes.
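A traceability matrix of this kind lends itself to an automated gap check before the auditor arrives. The sketch below assumes a hypothetical structure in which each reported metric points to three references (source evidence, transformation logic, and control narrative) and each reference must exist in the evidence inventory; the names `REQUIRED_LINKS` and `find_traceability_gaps` are illustrative only.

```python
# Hypothetical link types that every reported metric is expected to carry.
REQUIRED_LINKS = ("source_evidence", "transformation_logic", "control_narrative")


def find_traceability_gaps(matrix, evidence_inventory):
    """Return (metric, link, problem) tuples for broken or missing links.

    matrix: dict mapping metric name -> dict of link type -> reference id.
    evidence_inventory: set of reference ids held in the evidence pack.
    """
    gaps = []
    for metric, links in sorted(matrix.items()):
        for link in REQUIRED_LINKS:
            ref = links.get(link)
            if not ref:
                gaps.append((metric, link, "missing reference"))
            elif ref not in evidence_inventory:
                gaps.append((metric, link, f"{ref} not in inventory"))
    return gaps
```

Running a check like this during a mock assurance session surfaces missing evidence and broken references early, which are two of the predictable query areas noted above.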

Difference between narrative and evidence-based claims

A key problem found during the final years of reporting is the mixing of narrative with actual evidence. Narrative claims are typically statements such as "aim to reduce emissions by 50% in the next 5 years" or "promoting supplier sustainability"; these are expressions of management's intent and not actual evidence.

Assurance-ready evidence consists of quantifiable, empirical data points, such as an X percent reduction in actual emissions with proof of the source data, calculation documentation, and the business logic applied. Assurance providers typically want to understand the evidence behind the claim, how it was recorded and approved, and whether it can be retrieved. As such, narratives by themselves are simply not enough and need to be backed by objective, non-negotiable evidence.

The FRC's sectoral reviews have often identified weak evidence traceability as one of the most common reasons why climate-related audits have been judged poorly. ESMA's enforcement guidelines have likewise set out directions to explain the evidence, changes in methodology, assumptions, and data boundaries, as opposed to simply disclosing. The SEC's climate disclosure commentary has repeatedly emphasized assurance backed by appropriate governance, evidence, and control mechanisms, and not simply narrative claims.

Being ready for assurance is more than a simple reporting checklist. It is a framework of evidence and controls that must cover the evidence taxonomy, sampling logic, control narratives, and proactive management of potential auditor queries. Once the organization can distinguish between narrative claims and actual evidence through traceable, non-negotiable, and objective numbers, assurance readiness becomes an organizational discipline that will hold the organization in good standing over the long term.
